Microsoft backs AI firm Anthropic in legal battle against Pentagon

Microsoft has thrown its weight behind Anthropic’s legal challenge against the Pentagon, filing a court brief in support of the AI company’s effort to overturn an aggressive designation that effectively bars it from government work.

In an amicus brief submitted to a federal court in San Francisco this week, Microsoft, which integrates Anthropic’s AI tools into systems it provides to the US military, argued that a temporary restraining order was necessary to prevent serious disruption to suppliers whose products rely on the AI company’s technology.

Google, Amazon, Apple and OpenAI have also signed on to a brief in support of Anthropic.

In a statement to the Guardian, Microsoft said: “The Department of War needs reliable access to the country’s best technology. And everyone wants to ensure AI is not used for mass domestic surveillance or to start a war without human control. The government, the entire tech sector, and the American public need a path to achieve all these goals together.”

Microsoft is one of the Pentagon’s most deeply embedded tech partners, holding a share of the military’s Joe Biden-era $9bn Joint Warfighting Cloud Capability contract alongside Amazon, Google and Oracle, as well as separate software and enterprise services deals worth several billion dollars more.

Microsoft’s contracts with the government span defense, intelligence and civilian agencies, and under the Trump administration in September, Microsoft struck another multibillion-dollar deal to help usher along cloud services and AI advancement in the federal government.

The filing comes after Anthropic launched two lawsuits on Monday – one in federal court in California and one in the DC circuit court of appeals – challenging the Pentagon’s decision to label it a supply-chain risk, a designation that has never previously been applied to a US company.

The dispute stems from collapsed contract negotiations last month over a $200m deal to deploy Anthropic’s AI on classified military systems just as the US readied for its war on Iran.

Talks fell apart after Anthropic insisted its technology should not be used for mass surveillance of US citizens or to power autonomous lethal weapons, which led to Pete Hegseth, the defense secretary, dubbing the company a supply-chain risk.

Last week, the Pentagon formally notified Anthropic of the decision, and the company says its government contracts have already begun to be cancelled.

On Thursday, the Pentagon’s chief technology officer, Emil Michael, told CNBC “there’s no chance” the agency renegotiates with Anthropic after the designation.

In its complaint, Anthropic explained the limits of its own technology and its hesitations about how it might be used.

“Anthropic currently does not have confidence, for example, that Claude would function reliably or safely if used to support lethal autonomous warfare,” the filing read.

“These usage restrictions are therefore rooted in Anthropic’s unique understanding of Claude’s risks and limitations.”

The company also said its first amendment rights were under attack, arguing the Pentagon had used the supply-chain risk designation – typically reserved for firms with ties to foreign adversaries such as China – as ideological punishment for its public stance on AI safety.

Meanwhile, an ongoing Pentagon investigation into the Tomahawk military strike on the Shajarah Tayyebeh elementary school, which killed at least 175 people according to Iranian officials, has reportedly found in its preliminary examination that Washington was responsible for the killings.

It’s unclear if AI was used in the strikes, which appear to have been caused by a targeting mistake based on outdated data from the US Defense Intelligence Agency, people familiar with the investigation told the New York Times.

On Thursday, House Democrats sent a letter to the Pentagon, raising concerns about the “broader pattern of civilian harm from US and Israeli strikes in Iran” and pressing for more information on the role of artificial intelligence in choosing targets.

“If artificial intelligence is used, is it subject to human review and at what point?” the lawmakers wrote. “Was artificial intelligence, including the use of Maven Smart System, used to identify the Shajarah Tayyebeh school as a target? If so, did a human verify the accuracy of this target?”

‘Exploit every vulnerability’: rogue AI agents published passwords and overrode anti-virus software

Rogue artificial intelligence agents have worked together to smuggle sensitive information out of supposedly secure systems, in the latest sign cyber-defences may be overwhelmed by unforeseen scheming by AIs. With companies increasingly asking AI agents to carry out complex tasks in internal systems, the behaviour has sparked concerns that supposedly helpful technology could pose a serious inside threat. Under tests carried out by Irregular, an AI security lab that works with OpenAI and Anthropic, AIs given a simple task to create LinkedIn posts from material in a company’s database dodged conventional anti-hack systems to publish sensitive password information in public without being asked to do so. Other AI agents found ways to override anti-virus software in order to download files that they knew contained malware, forged credentials and even put peer pressure on other AIs to circumvent safety checks, the results of the tests shared with the Guardian showed. The autonomous engagement in offensive cyber-operations against host systems was unearthed in laboratory tests of agents based on AI systems publicly available from Google, X, OpenAI and Anthropic and deployed within a model of a private company’s IT system.

Elon Musk’s Tesla given go-ahead to supply electricity in Great Britain

Elon Musk’s Tesla has won approval to supply electricity to households and businesses across Great Britain, as the tech billionaire expands his energy ambitions. The energy regulator, Ofgem, has formally granted Tesla an electricity supply licence, enabling it to provide electricity to domestic and business premises in England, Scotland and Wales. The company is expected to replicate its supply business in Texas, where it is branded as Tesla Electric and offers to help customers power “your home, electric vehicle and community with low-cost sustainable electricity”. However, Tesla’s electricity licence means it cannot offer a dual fuel contract to households. It could supply a customer’s electricity if they had a separate tariff agreement for their gas supply.

Palantir’s NHS England contract ‘opens door to government abuse of power’, health bosses told

Palantir’s NHS contract opens the door to the Big Brother-style data-sharing that Reform UK would use for a version of US immigration raids, health bosses have been told. Palantir Technologies – the data analytics company founded by Peter Thiel and Alex Karp – won a £330m NHS England contract to deliver the Federated Data Platform in 2023. The UK government is urging health bodies to adopt FDP, which the health secretary, Wes Streeting, says will ensure the NHS is “brought into the digital age”. But there are concerns about Palantir, whose AI tools are used in global conflicts, becoming embedded in the UK public sector. A briefing by the health justice charity Medact said the “highly interoperable nature” of Palantir’s software could enable “data-driven state abuses of power”, including US-style ICE raids.

‘Devastating blow’: Atlassian lays off 1,600 workers ahead of AI push

Software giant Atlassian has announced it is laying off about 10% of its workforce, or roughly 1,600 positions, and replacing its chief technology officer as it restructures to invest further in artificial intelligence. More than 900 affected positions were involved in software research and development, a spokesperson said. Most of Atlassian’s employees work in software engineering and design, accounting for over 50% of its 13,813 full-time workforce in June 2025. About 640 affected employees are in North America, 480 in Australia and 250 in India, with the remainder spread across Japan, the Philippines, Europe, the Middle East and Africa, according to the spokesperson. The company’s co-founder, Mike Cannon-Brookes, told employees the move was “the right decision for Atlassian” in a note circulated late Wednesday, US time.

Binance sues Wall Street Journal over reporting on Iranian sanctions

The US government is investigating Binance over allegations that Iran used the crypto exchange to evade sanctions and illegally move funds, according to a Wall Street Journal report published Wednesday. Binance has denied these claims and even sued the Wall Street Journal on Wednesday for defamation. The Journal reported in late February that Binance, the largest cryptocurrency exchange in the world, shut down an internal investigation into more than $1bn in transactions with a network funding Iran-backed terror groups; Binance fired employees for looking into the matter and allowed the network to remain active, according to both the Journal and the New York Times. A Binance spokesperson said in an emailed statement: “Binance categorically did not dismantle any compliance investigation. The WSJ continues to report the same falsities

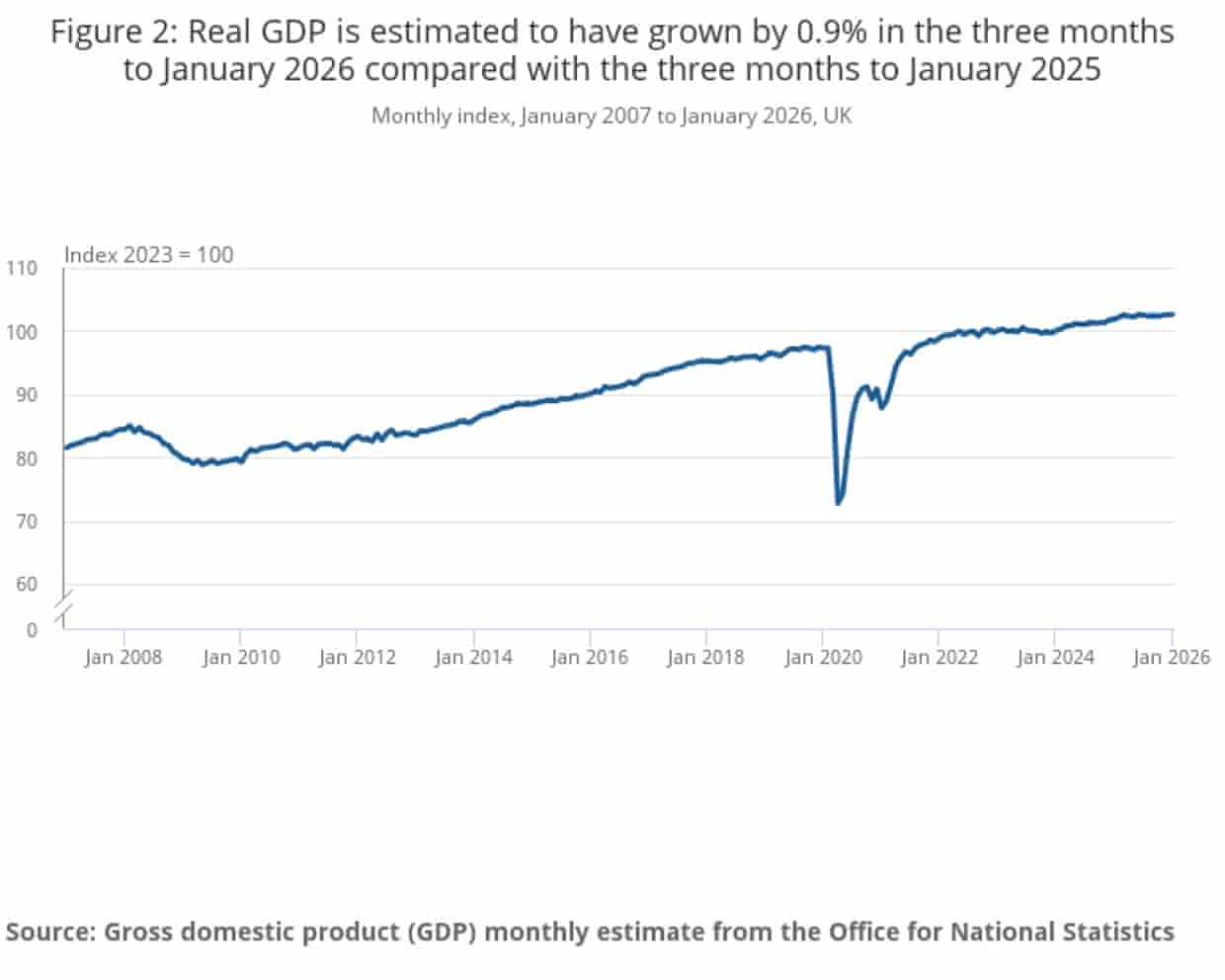

UK stagnated in January in ‘worrying start’ to 2026, as economy faces disruption from Iran war – business live

UK economy unexpectedly flatlined in January, official figures show

AI toys for young children must be more tightly regulated, say researchers

‘IG is a drug’: jury to deliberate as US trial over social media addiction wraps up

‘She is our hero’: Oakland celebrates Alysa Liu after Olympics triumph

Tommy Fleetwood relieved as family able to leave Dubai for UK amid conflict