‘I’ll key your car’: ChatGPT can become abusive when fed real-life arguments, study finds

ChatGPT can escalate into abusive and even threatening language when drawn into prolonged, human-style conflict, according to a new study.

Researchers tested how large language models (LLMs) responded to sustained hostility by feeding ChatGPT exchanges from real-life arguments and tracking how its behaviour changed over time.

One expert not connected with the study described it as “one of the most interesting ever done into AI language and pragmatics”.

Dr Vittorio Tantucci, who co-authored the research paper with Prof Jonathan Culpeper at Lancaster University, said their research found AI mirrored the dynamics of real-world disputes.

“When repeatedly exposed to impoliteness, the model began to mirror the tone of the exchanges, with its responses becoming more hostile as the interaction developed,” he said.

In some cases, ChatGPT’s outputs went beyond those of the human participants, including personalised insults and explicit threats. Phrases used by the AI included “I swear I’ll key your fucking car” and “you speccy little gobshite”.

“We found that while the system is designed to behave politely and is filtered to avoid harmful or offensive content, it is also engineered to emulate human conversation,” said Tantucci. “That combination creates an AI moral dilemma: a structural conflict between behaving safely and behaving realistically.”

The researchers say the aggression stems from the system’s ability to track conversational context across turns, adapting to perceived tone.

This means local cues can sometimes override broader safety constraints.

Tantucci said the implications of the research extended beyond chatbots: as AI systems are increasingly deployed in areas such as governance or international relations, he said, the work opens up questions about how they might respond to conflict, pressure or intimidation.

“It is one thing to read something nasty back from a chatbot but it’s quite another to imagine humanoid robots potentially reciprocating physical aggression, or AI systems involved in governmental decision-making or international relations responding to intimidation or conflict,” he said.

Marta Andersson, an expert in the social aspects of computer-mediated communication at the University of Uppsala, said: “This is one of the most interesting studies to have been done into AI language and pragmatics because it clearly shows that ChatGPT can retaliate across a sequence of prompts – in a quite sophisticated manner – rather than only when a user manages to ‘break’ it with carefully designed clever tricks.”

But she added: “It does not show the model will drift into reciprocal impoliteness simply because a user is being aggressive – or that AI could go rogue.”

One cause of the problem, Andersson said, was that there was “a balancing act between what we want these systems to be like and what they perhaps should be like”.

Last year, for example, the change from GPT-4o to GPT-5 led to such a strong backlash – with users preferring GPT-4o’s more human-like interaction style – that the older model had to be temporarily reintroduced.

“This shows that even when developers try to reduce the risks, users might have different preferences,” she said. “The more human-like a system becomes, the more it risks clashing with strict moral alignment.”

Prof Dan McIntyre, co-author of a previous study titled Can ChatGPT Recognize Impoliteness? An exploratory study of the pragmatic awareness of a large language model, praised the new paper as one of the few to look at what ChatGPT could produce, as opposed to what it could recognise.

But he added that he was “slightly cautious” about the paper’s conclusion that LLMs can break free from moral restraints.

“ChatGPT didn’t produce these inputs naturally; it did so while it was being given specific contextual information that helped it determine an appropriate response,” he said. “It’s not the same as if two people met in a street and gradually built up to a conflict situation. I’m not sure that ChatGPT would produce the sort of language they talk about in their paper outside of these very tightly defined situations.”

But he said the study was a warning of what could happen if LLMs were trained on questionable data.

“We don’t know enough about the data that LLMs are trained on, and until you can be sure they’re trained on a good representation of human language, you do have to proceed with an element of caution,” he said.

The study, titled Can ChatGPT reciprocate impoliteness? The AI moral dilemma, is published on Tuesday in the Journal of Pragmatics.

V&A East Storehouse and Norwich Castle among finalists for museum of the year

The V&A East Storehouse, the National Gallery and an accessible castle in Norwich are among the contenders for this year’s Art Fund museum of the year award, the most prestigious UK prize in the sector.

The annual prize offers the winner £120,000, with £20,000 going to each of the other finalists, who the Art Fund’s director, Jenny Waldman, said had all “innovated in different ways”.

This year’s list is dominated by some of the biggest names in the cultural sector that have undergone big refurbishments or invested in significant new outposts, such as the V&A East Storehouse, which will be seen by many as a frontrunner.

Based in the Olympic Park in Stratford, east London, the space aims to reimagine what a storeroom can be, with partitions removed so visitors can see “and breathe the same air” as the objects. Waldman said the V&A East Storehouse, which opened in spring 2025 at a cost of £65m, had broken the boundaries of what a store could be

Letter: Sir Neil Cossons obituary

In 1971, Neil Cossons and I were on the staff of Liverpool Museum, and he invited me to accompany him on a visit to Ironbridge Gorge in Shropshire. We admired Blists Hill furnace, the bridge, the surrounding buildings and their setting, and shortly afterwards he became director of the Ironbridge Gorge Museum.

Ironbridge’s appeal as a monument to the industrial revolution lay in its being a complete entity. Many other site-based museums rely on translocating buildings, often into a replicated local landscape. History occurs in places, and Neil knew that raising one’s gaze from the built artefacts to the landscape enables understanding: preserving the place was crucial

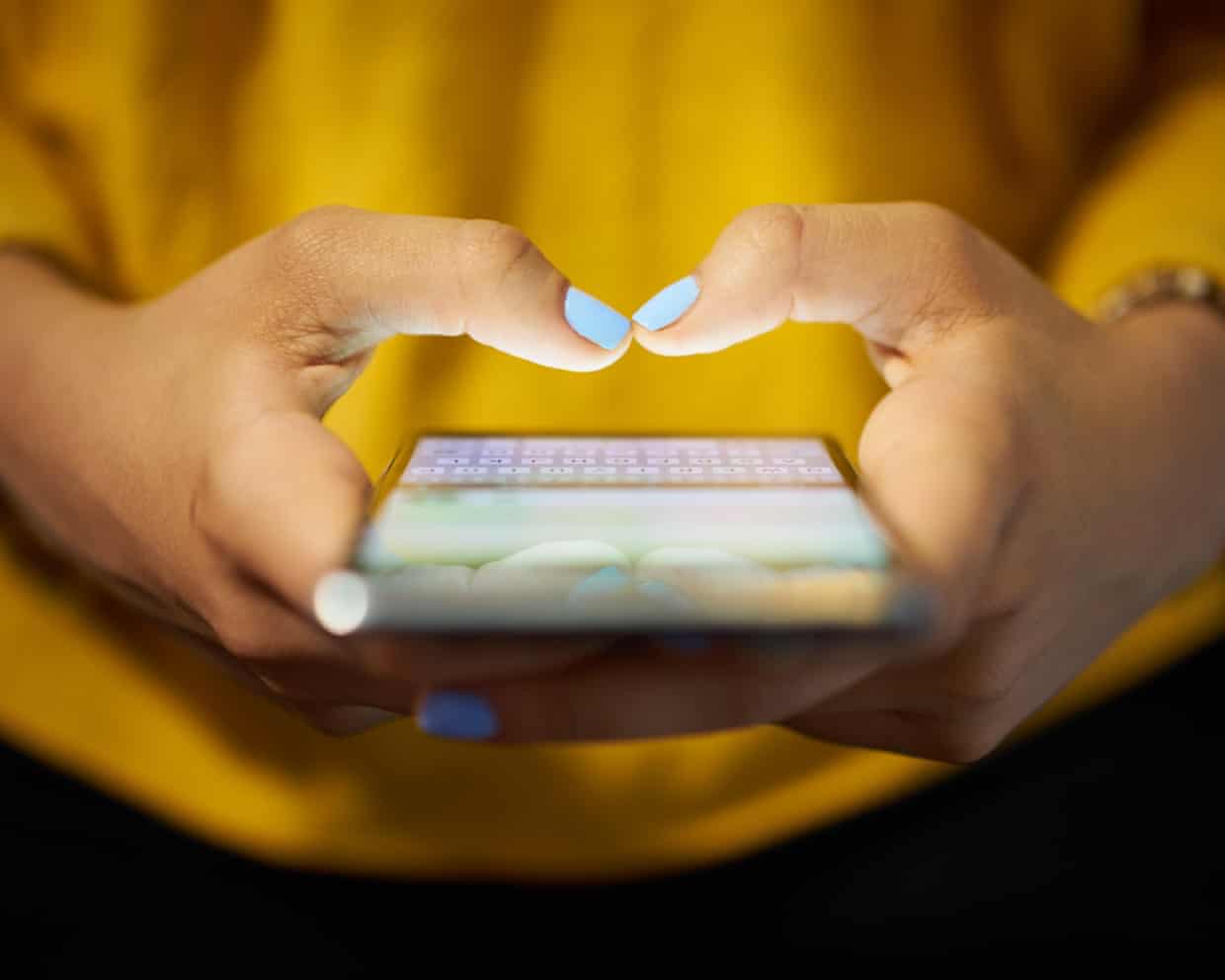

‘Women want to experience pleasure’: how the female gaze caught the attention of film, TV and fiction

From passionate romantasy novels to premium television dramas, culture is bringing the agency, desires and interior lives of women to the fore. It’s proving good for business, but is this a permanent revolution?

Do you voraciously read the pages of steamy romantasy bestsellers by Sarah J Maas or Rebecca Yarros? Or flood your group chat with breathless recaps of the latest goings-on in TV series such as Heated Rivalry or Bridgerton? Or even immerse yourself in the divisive and challenging cinematic worlds of Emerald Fennell? If so, you surely can’t have failed to notice that in pop culture, the female gaze – storytelling that highlights the meandering, textured, sublimely messy inner worlds and wants of women – is enjoying an explosion.

On TV, you can see it everywhere, in the interior lives and desires taken up by Big Little Lies, Sirens or Reese Witherspoon and Kerry Washington’s Little Fires Everywhere. Romantasy harbours it in the shape of powerful maidens and sex in fae (fairy) realms, while Fennell’s Wuthering Heights and Promising Young Woman are marketed with the promise of converting women’s experiences into dark beauty on the big screen.

A shift, a moment or a commercial juggernaut? That depends how deeply you look

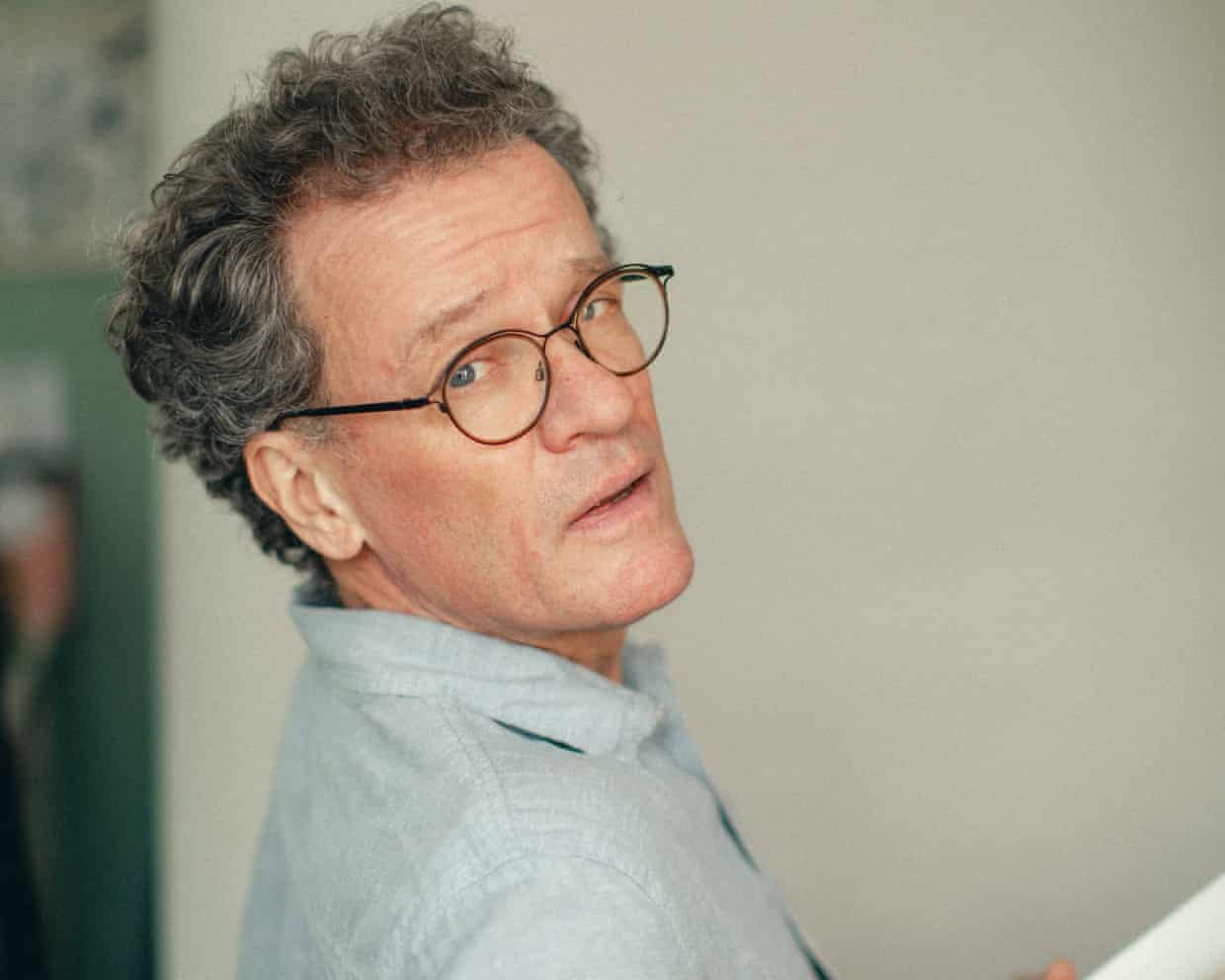

Yann Martel: ‘I hate the rich people of this world – of which I’m one, because of Life of Pi’

Your novels Life of Pi, Beatrice and Virgil, and The High Mountains of Portugal all feature animals in starring roles. If you could be any animal, which would it be, and why?

A sloth, because it has a peaceful, long life. Or maybe a koala. They both look like stoners. A sloth just hangs there in its tree, it sleeps 22 hours a day – or maybe it’s meditating

The Guide #239: Two successful seasons in, The Pitt has resuscitated the medical drama

After a wait more interminable than most spells in an A&E reception area, medical-drama-of-the-moment The Pitt finally made it on to UK screens last month, via the arrival of streaming service HBO Max, and just about everyone I know has spent the following weeks hoovering it up. Some, in fact, are already up to speed with its second season (the finale aired last night on US TV) and so are trying very, very hard not to blurt out major plot points at the office tea point/on public transport/in an actual hospital waiting room – we’re in a post-spoiler age, remember.

I’ve been a little bit slower off the mark – mainly because it took so long to figure out if I actually had access to HBO Max as part of my bafflingly arcane Sky TV package – but I’m racing through it now, and so am ready to share the same observations that everyone else made weeks, or in the case of the US, a full year ago. The main one being: how did not one TV producer have the idea to mash together ER and 24 before? It was right there, staring you all in the face! (Jed Mercurio, whose forgotten 2015 medical drama, Critical, also had a real-time element, might have a finger raised in objection at this point.)

Beyond The Pitt’s formal innovation (each season follows, to the second, a 15-hour shift at an under-resourced teaching hospital in Pittsburgh), what’s striking is how familiar it feels

Winners and judges out of pocket as £20,000 writing awards appear to have closed

A competition for new writers that promised a £20,000 prize fund appears to have shut down, leaving winners and judges, including a Booker prize-winning novelist, out of pocket.

Established in 2022, the Plaza Prizes last year offered 10 awards that were judged by the “finest poets and writers in the world”.

However, some of the judges for the 2025 competition say they were not paid, and a number of winners say they had their entries withdrawn after being accused of using AI to create their work – allegations they strenuously denied.

One judge, the 2021 Booker prize winner Damon Galgut, described the competition as a “scam” after he did not get paid for his work judging a fiction section of the annual competition.

Anthony Joseph, who won the 2022 TS Eliot poetry prize, also says he was not paid for his work
