GameStop’s $55.5bn bid for eBay rejected as ‘neither credible nor attractive’

The board of eBay has rejected the US video games retailer GameStop’s surprise $55.5bn (£41bn) bid for the online marketplace, describing the proposal as “neither credible nor attractive”. Earlier this month, GameStop made an unsolicited bid for eBay, publishing a letter on its website outlining a half-cash, half-stock proposal. This was despite the US games company – which became a global household name during the meme stock craze of 2021 – being worth far less than its takeover target. GameStop had a market valuation of roughly $12bn before its bid, about a quarter of eBay’s $46bn valuation.

Molière Ex Machina: AI used to create ‘new work’ by beloved French playwright

Molière is to the French what Shakespeare is to the English: the last word in historical literature, drama, wit and satire. Now, more than 350 years after his death, the 17th-century dramatist has been revived after scholars at the Sorbonne University in Paris used artificial intelligence to help write an experimental play in his style. L’Astrologue ou les Faux Présages (The Astrologer, or False Omens), a three-act comedy, made its debut at the Royal Opera at the Château de Versailles last week. The two-hour play tells the story of a wealthy bourgeois Parisian who, under the instruction of a charlatan astrologer called Pseudoramus, insists his daughter Lucile marry a debt-ridden and elderly wigmaker. While the theme could well have been dreamed up by Molière, the dialogue, music, costumes and scenery were all created with the help of a French AI tool called Le Chat (The Cat).

Who is Louis Mosley, the man tasked with defending Palantir against its critics?

The hall was packed with rightwing radicals when Louis Mosley heralded a coming revolution. Just as Oliver Cromwell – that “crusader for Christ and liberty” – routed King Charles I’s royalists, “a similar revolution is brewing today”, said the UK and Europe boss of Palantir. Globalism’s “twilight” was upon us, he said in a speech dotted with admiring mentions of the podcaster Joe Rogan and “Elon’s Doge”. It was not a typical peroration for a big UK government contractor with more than £600m in deals with the NHS, the Ministry of Defence and police. But Palantir, the world’s most controversial tech company, is no typical contractor.

Europe’s AI translation industry told it risks reputation by partnering with US firms

AI companies in Europe risk losing their world-leading status in the field of machine translation, industry figures have said, after the decision by one of the continent’s leading startups to partner with Amazon’s cloud computing division provoked alarm. While businesses in the EU have generally lagged behind the US and China in AI adoption, a small group of European companies have cornered the global market for high-quality machine translations for professional use. The biggest success story is Cologne-headquartered DeepL, an online translator that regularly outperforms Google Translate in accuracy assessments. Used by governments, courts and half of the Fortune 500 list of highest-earning US companies, last year it was reported to have recorded revenues of $185.2m.

Shivon Zilis, mother of four of Elon Musk’s children, testifies in OpenAI trial

Shivon Zilis, a Neuralink executive and the mother of four of Elon Musk’s children, took the stand on Wednesday as one of the most highly anticipated witnesses in Musk’s case against OpenAI. The ChatGPT maker has argued that, while Zilis worked with OpenAI from 2016 to 2023, she was also involved in a secret relationship with Musk, acting as an informant for him. Musk’s case against OpenAI alleges that the company’s CEO, Sam Altman, and president, Greg Brockman, co-founders of the company with Musk, broke a founding agreement when they restructured it from a non-profit to a for-profit enterprise. The Tesla CEO accuses Altman and Brockman of unjustly enriching themselves and wants both removed from their positions at the startup, one of the most valuable in the world. He is also seeking the undoing of the for-profit restructuring and $134bn in damages to be redistributed to OpenAI’s non-profit arm.

TikTok’s algorithm favored Republican content in 2024 US elections, study finds

A study published Wednesday in the journal Nature finds that TikTok’s algorithm systematically prioritized pro-Republican content in three states leading up to the 2024 US elections. Researchers created hundreds of dummy accounts and conditioned them to mimic real users’ behavior by watching a set of videos aligned with either the US Democratic or Republican party. Then, they tracked the videos TikTok recommended on these accounts’ For You pages, TikTok’s main feed. “We found a consistent imbalance,” they wrote in Nature. About 42% of US social media users say that these platforms are important for getting involved with political and social issues, according to Pew Research, but it’s not often clear how recommendation algorithms shape what appears in feeds.

Joseph Fiennes on parenting, politics and banning children from social media: ‘Stand up, Keir, this is your kids’ generation’

From The Sheep Detectives to Rivals: your complete entertainment guide to the week ahead

Historic Oxford cinema under threat as Oriel College refuses to extend lease

Arthur Miller opens up about marriage to Marilyn Monroe in newly unearthed recordings

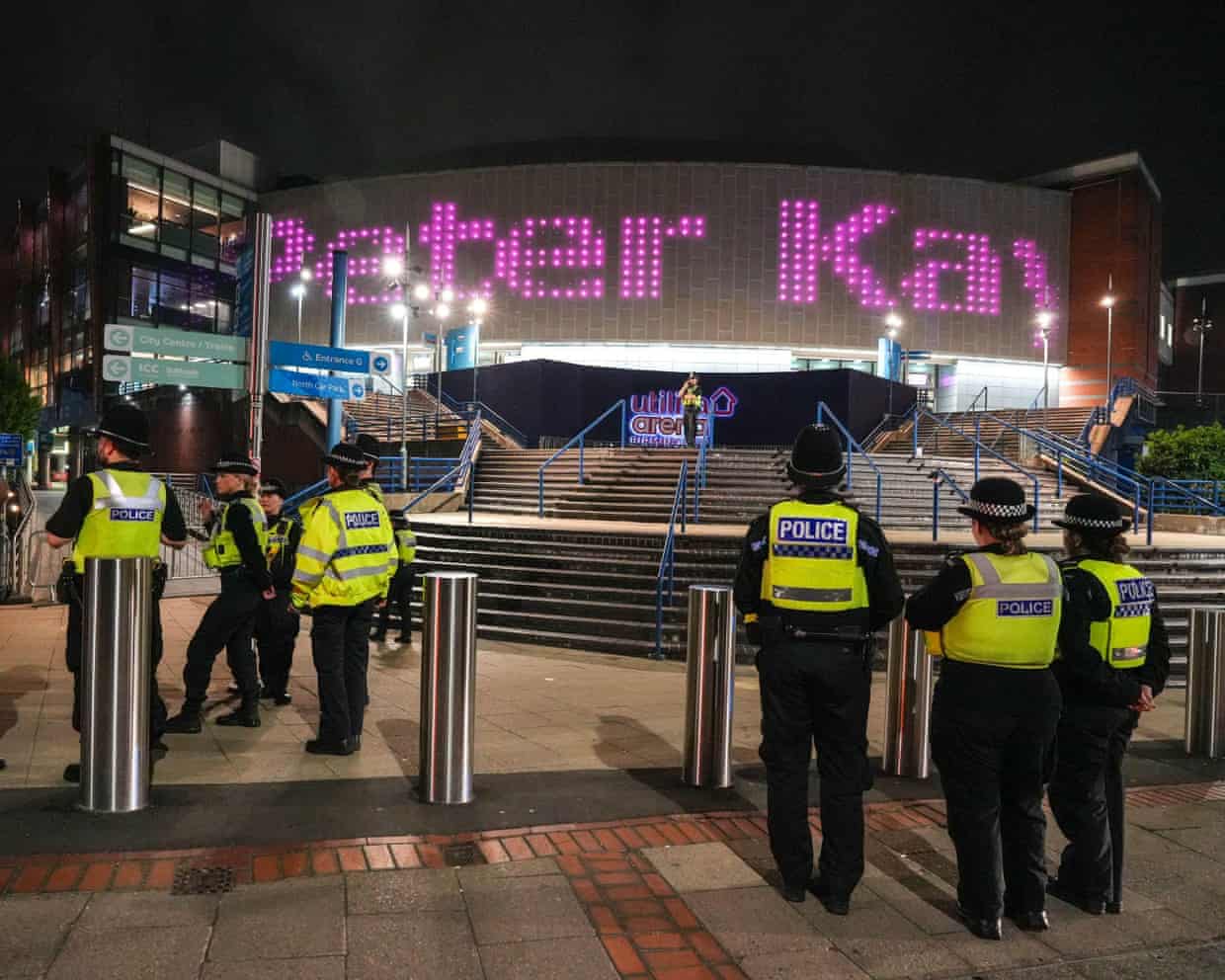

Man charged over bomb hoax after Peter Kay show evacuated

Royal Opera House calls for release of Georgian bass singer jailed over democracy protests