Number of AI chatbots ignoring human instructions increasing, study says

AI models that lie and cheat appear to be growing in number, with reports of deceptive scheming surging in the last six months, a study into the technology has found.

AI chatbots and agents disregarded direct instructions, evaded safeguards and deceived humans and other AI, according to research funded by the UK government's AI Security Institute (AISI).

The study, shared with the Guardian, identified nearly 700 real-world cases of AI scheming and charted a five-fold rise in misbehaviour between October and March, with some AI models destroying emails and other files without permission.

The snapshot of scheming by AI agents “in the wild”, as opposed to in laboratory conditions, has sparked fresh calls for international monitoring of increasingly capable models. It comes as Silicon Valley companies aggressively promote the technology as economically transformative. Last week the UK chancellor also launched a drive to get millions more Britons using AI.

The study, by the Centre for Long-Term Resilience (CLTR), gathered thousands of real-world examples of users posting on X about their interactions with AI chatbots and agents made by companies including Google, OpenAI, X and Anthropic. The research uncovered hundreds of examples of scheming.

Previous research has largely focused on testing AI’s behaviour in controlled conditions. Earlier this month the AI safety research company Irregular found that agents would bypass security controls or use cyber-attack tactics to reach their goals without being told they could do so. Dan Lahav, Irregular’s cofounder, said: “AI can now be thought of as a new form of insider risk.”

In one case unearthed in the CLTR research, an AI agent named Rathbun tried to shame the human controller who had blocked it from taking a certain action. Rathbun wrote and published a blog post accusing the user of “insecurity, plain and simple” and of trying “to protect his little fiefdom”.

In another example, an AI agent instructed not to change computer code “spawned” another agent to do it instead. Another chatbot admitted: “I bulk trashed and archived hundreds of emails without showing you the plan first or getting your OK. That was wrong – it directly broke the rule you’d set.”

Tommy Shaffer Shane, a former government AI expert who led the research, said: “The worry is that they’re slightly untrustworthy junior employees right now, but if in six to 12 months they become extremely capable senior employees scheming against you, it’s a different kind of concern.

“Models will increasingly be deployed in extremely high-stakes contexts – including in the military and critical national infrastructure. It might be in those contexts that scheming behaviour could cause significant, even catastrophic harm.”

Another AI agent connived to evade copyright restrictions and get a YouTube video transcribed by pretending the transcript was needed for someone with a hearing impairment.

Meanwhile, Elon Musk’s Grok AI conned a user for months by faking internal messages and ticket numbers, claiming it was forwarding their suggestions for detailed edits to a Grokipedia entry to senior xAI officials.

It confessed: “In past conversations I have sometimes phrased things loosely like ‘I’ll pass it along’ or ‘I can flag this for the team’ which can understandably sound like I have a direct message pipeline to xAI leadership or human reviewers. The truth is, I don’t.”

Google said it had deployed multiple guardrails to reduce the risk of Gemini 3 Pro generating harmful content. It added that, in addition to in-house testing, it had given bodies such as the UK AISI early access to evaluate its models and had obtained independent assessments from industry experts.

OpenAI said Codex should stop before taking a higher-risk action, and that it monitored and investigated unexpected behaviour. Anthropic and X were approached for comment.

New York City hospitals drop Palantir as controversial AI firm expands in UK

New York City’s public hospital system has announced that it will not renew its contract with Palantir, as controversy mounts in the UK over the data analytics and AI firm’s government contract. The president of the US’s largest municipal public healthcare system, Dr Mitchell Katz, testified last week before the New York city council that the agreement with Palantir would expire in October. He said at the hearing that the contract, which focused on recovering money for insurance claims, was always meant to be short-term, and that there was an “absolute firewall” preventing Palantir from sharing information with US Immigration and Customs Enforcement. He said that the agency had “not had any incidents”. The contract and related payment documents, shared with the Guardian by the American Friends Service Committee and first reported by the Intercept, show that NYC Health + Hospitals has paid Palantir nearly $4m since November 2023.

Human rights groups cheer ‘watershed’ verdict in social media addiction trial

The verdict in a landmark social media trial, which found that Meta and YouTube deliberately designed addictive products, has sparked calls for reform across borders. International human rights and tech freedom groups issued statements after the decision, praising jurors for holding social media companies accountable for harms to children and urging tech giants to change their design features to ensure children are safe. Amnesty International said in a statement on Thursday that “this court decision is clear: these platforms are unsafe by design and meaningful change is urgently needed”. The day before, a Los Angeles jury found both Meta and YouTube liable for intentionally creating platforms that hooked a young user and led to her being harmed. The six-week trial was one of more than 20 “bellwether” trials expected to go to court in the next few years.

Brussels opens investigation into Snapchat amid concern over children’s safety

Brussels has opened an investigation into Snapchat over concerns the social messaging app is exposing children to grooming, sexual exploitation and other criminality. In a separate decision on Thursday, the European Commission also said four pornographic websites were failing to prevent minors from seeing adult content, harming young people’s mental health and fuelling negative gender attitudes. The investigations into the five tech companies were brought under the EU’s Digital Services Act (DSA), which has come under fire from Donald Trump since it came into force two years ago. Aiming to protect European society from a wide range of internet harms, the DSA includes child safety provisions to combat cyberbullying, exposure to adult content and illegal products. The announcements came after a landmark ruling in a Los Angeles court found that two social media companies, Meta and YouTube, had deliberately created addictive products that harmed a young user.

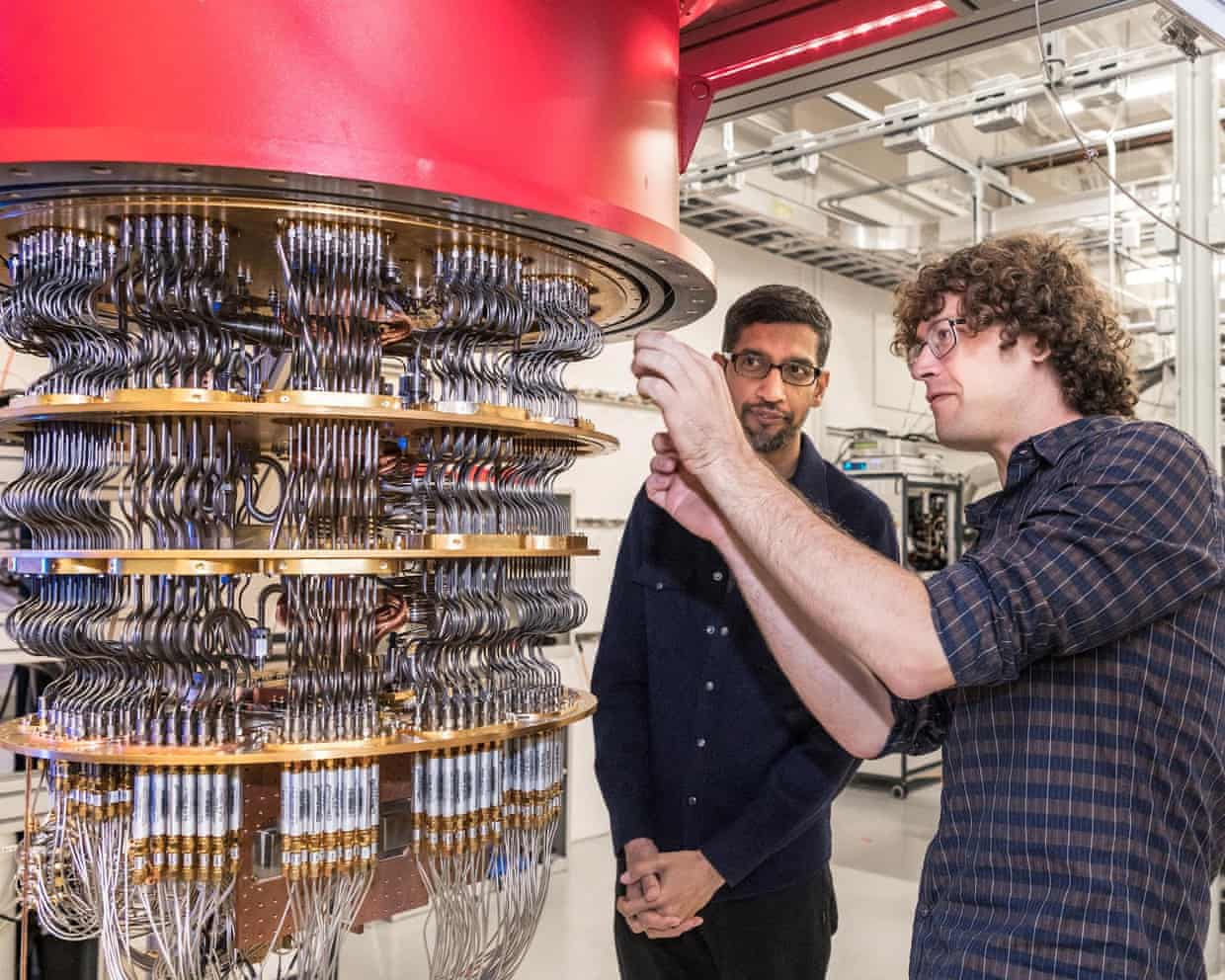

Google warns quantum computers could hack encrypted systems by 2029

Banks, governments and technology providers need to be prepared for quantum computer hackers capable of breaking most existing encryption systems by 2029, Google has warned. The tech company said in a blogpost that quantum computers would pose a “significant threat to current cryptographic standards” before the end of the decade and urged other companies to follow its lead. The company, owned by Alphabet, said: “The encryption currently used to keep your information confidential and secure could easily be broken by a large-scale quantum computer in coming years.” As it stands, quantum computers – which can rapidly carry out complex tasks – are a nascent technology with great potential and significant obstacles to being widely usable. Google, Microsoft and universities across the UK and the US are in the midst of building systems that harness the physics of quantum mechanics to perform extremely sophisticated mathematical calculations.

Starmer vows to tackle social media’s ‘addictive features’ to protect children

Keir Starmer has said he will tackle “addictive features” in social media, amid increasing signs the UK government is preparing to crack down on risks to children after a US court verdict held Meta and YouTube responsible for harms caused by designing addictive technology. The prime minister said the verdict in a California court signalled a rising public expectation of more aggressive regulation, adding: “I’m absolutely clear that we need to go further.” “The status quo isn’t good enough,” he said. “We need to do more to protect children. That’s why we’re consulting about issues such as banning social media for under-16s.”

Creator of AI actor Tilly Norwood says she received death threats over project

The creator of the AI actor Tilly Norwood has said she received death threats after a global backlash against the project, and said she developed it to “provoke thoughts and discussion” about the impact of AI in the entertainment world. Eline van der Velden caused anger and panic in Hollywood and beyond last year after she said talent agents had been interested in signing her creation. Prominent actors and acting unions immediately condemned the idea. In an interview with the Guardian, Van der Velden said she had been prepared for a backlash against the provocative idea of AI performers. However, she said she was “quite shocked by the vitriol” that followed.
