Anthropic-Pentagon battle shows how big tech has reversed course on AI and war

The standoff between Anthropic and the Pentagon has forced the tech industry to once again grapple with the question of how its products are used for war – and what lines it will not cross. Amid Silicon Valley’s rightward shift under Donald Trump and the signing of lucrative defense contracts, big tech’s answer is looking very different than it did even less than a decade ago. Anthropic’s feud with the Trump administration escalated three days ago as the AI firm sued the Department of Defense, claiming that the government’s decision to blacklist it from government work violated its first amendment rights. The company and the Pentagon have been locked in a months-long standoff, with Anthropic attempting to prohibit its AI model from being used for domestic mass surveillance or fully autonomous lethal weapons. Anthropic has argued that giving in to the DoD’s demands to permit “any lawful use” of its technology would violate its founding safety principles and open up its technology for potential abuse, staking an ethical boundary that others in the industry must decide whether they want to cross.

Although Anthropic’s refusal to remove safety guardrails and the Pentagon’s subsequent retaliation have highlighted longstanding concerns over the use of AI for conflict, the fight has shown how much the goal posts have moved when it comes to big tech’s ties to the military. “If people are looking for good guys and bad guys, where a good guy is someone who doesn’t support war,” said Margaret Mitchell, an AI researcher and chief ethics scientist at the tech firm Hugging Face, “then they’re not going to find that here.” There are a number of contributing factors in big tech’s newfound embrace of militarism. Its alignment with the Trump administration, which has included shows of fealty to Trump from major CEOs, has tied tech firms to the government’s desire to expand its military capabilities.

The administration’s vow to overhaul federal agencies using artificial intelligence has also specifically signaled an opportunity for AI firms to integrate their products into government and military operations in a way that could secure revenue for years to come. Looming in the background, concern over China’s technological advancement and a surge in international defense spending have also shifted attitudes in the industry. It was not so long ago, however, that working with the military on potentially harmful technology was seen as a red line for many big tech workers. In 2018, thousands of Google employees launched a protest against a program to analyze drone footage for the DoD called Project Maven. “We believe that Google should not be in the business of war,” over 3,000 workers stated in an open letter at the time.

Google decided not to renew Project Maven following the protests and published policies that barred pursuing technology that could “cause or directly facilitate injury to people”. In the years since the Project Maven protest, though, Google has clamped down on employee activism, removed the 2018 language from its policies that prohibited creating technology for weaponry and signed numerous contracts that allow militaries to use its products. In 2024, the tech giant fired over 50 employees in response to protests against the company’s military ties to the Israeli government. Chief executive Sundar Pichai sent a memo to employees after the firings stating that Google was a business and not a place to “fight over disruptive issues or debate politics”. Google announced just this week that it would use its Gemini artificial intelligence to give the military a platform for creating AI agents to work on unclassified projects.

OpenAI too had a blanket ban on allowing any militaries to access its models prior to 2024, but has since dropped it and now has its chief product officer serving as a lieutenant colonel in the US military’s “executive innovation corps”. The startup, along with Google, Anthropic and xAI, signed an up-to-$200m contract with the DoD last year to integrate its technology into military systems. On the day that Pete Hegseth, the defense secretary, declared Anthropic a supply chain risk, OpenAI secured a deal with the DoD allowing its tech to be used in classified military systems. Elsewhere in the tech industry, more hawkish companies like defense tech firm Anduril, founded the year before the Google Maven protests, and surveillance tech maker Palantir have made partnering with the DoD a cornerstone of their businesses and attempted to sway Silicon Valley politics towards their worldview. Palantir has been ahead of the curve on working with the military, contracting with military intelligence to map planted explosives in Afghanistan in the early 2010s.

Chief executive Alex Karp published a book last year dedicated in large part to advocating for closer integration of the tech industry and AI with the US military, in one passage accusing the Google workers who protested in 2018 of being nihilists. After Google dropped the Project Maven contract in 2019, Palantir took it over. Maven is now the name of the classified system that military personnel use to access Anthropic’s Claude, according to the Washington Post. Even as Anthropic has received public praise in its standoff with the Pentagon, its co-founder and chief executive Dario Amodei has emphasized that the AI company and the government largely want the same things. “Anthropic has much more in common with the Department of War than we have differences,” Amodei wrote in a blogpost last Thursday.

While the White House has accused Anthropic of being “a radical left, woke company”, Amodei’s views on the use of AI in conflict and fears of its misuse are far from tree-hugging pacifism. In a lengthy essay published in January, Amodei warned against potential harms of AI such as the creation of deadly bioweapons and threats from China maliciously using the technology. Simultaneously, he argued that companies should arm democratic governments and militaries with the most advanced AI possible to combat autocratic adversaries. He expressed less concern about AI making it easier to kill people or conduct warfare and more about the reliability of the technology and threat of it being consolidated by too small a number of people with “fingers on the button” who could control an autonomous drone army. Amodei’s essay also foreshadowed some of the central issues involved in his fight with the Pentagon, including the potential for AI as a tool of mass surveillance.

While arguing for bulwarks against the abuse of AI, he stated that his formulation was that it was okay to use the technology for national defense “in all ways except those which would make us more like our autocratic adversaries”. While Amodei has so far stuck to the company’s red lines, he has also repeatedly stated that he wants Anthropic to continue working with the Defense Department. The company’s lawsuit against the DoD showcases how extensively the company has been willing to work with the military and alter its products for their use. “Anthropic does not impose the same restrictions on the military’s use of Claude as it does on civilian customers,” Anthropic’s California lawsuit stated. “Claude Gov is less prone to refuse requests that would be prohibited in the civilian context, such as using Claude for handling classified documents, military operations, or threat analysis.”

The government has reportedly been using Claude for target selection and analysis in its bombing campaign against Iran, a use-case that Anthropic has given no indication that it has an issue with. In his blog post on Anthropic’s website last week, Amodei stated that he did not believe that his company had any role in the military’s operational decision-making. He claimed that Anthropic supports American frontline warfighters and remains committed to providing them with technology. “We have said to the department of war that we are OK with all use cases,” Amodei told CBS News last week. “Basically 98 or 99% of the use cases they want to do, except for two.”

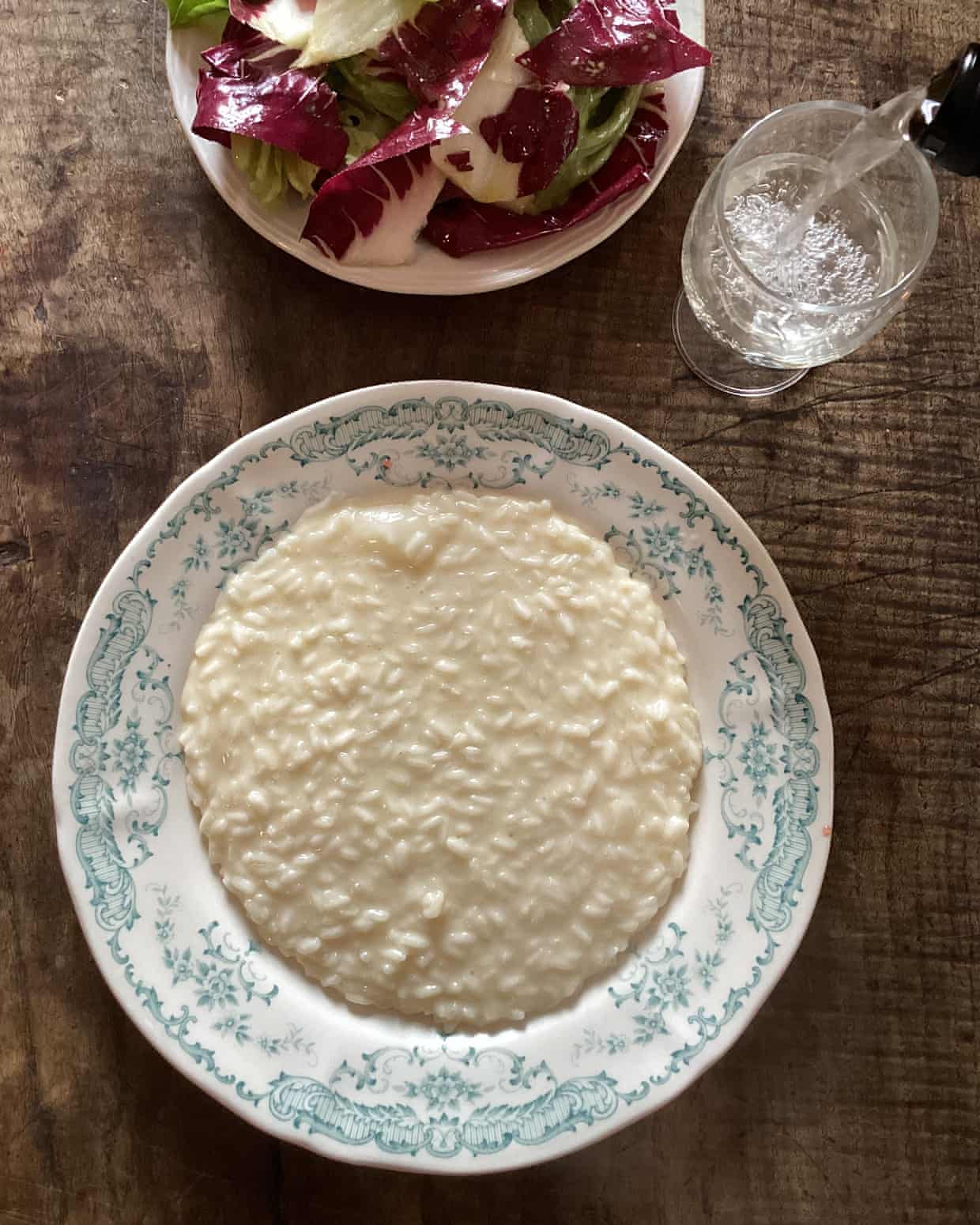

Rachel Roddy’s recipe for risotto in bianco | A kitchen in Rome

‘Highly problematic behavior’: Noma residency in LA starts with PR crisis

Before sunrise: while Sydney sleeps, suhoor meals attract a lively social scene during Ramadan

How to use up limp herbs in a flavoured butter – recipe | Waste not

Chicken wings and soup: Helen Graves’ spring onion recipes

Chefs the world over strive for a perfect score from Rate My Chives. Could I achieve one at home?