ChatGPT driving rise in reports of ‘satanic’ organised and ritual abuse, UK experts say

ChatGPT is driving a rise in reports of organised and ritual abuse, UK experts have said, as survivors of “satanic” sexual violence use the AI tool for therapy. Police say organised and ritual abuse, and “witchcraft, spirit possession and spiritual abuse” (WSPRA) against children, is under-reported in the UK. There is no modern-day charge that covers it specifically, but such offending is typified by sexual abuse, violence and neglect involving ritualistic elements – sometimes inspired by satanism, fascism or esoteric religious beliefs – to control victims. Perpetrators include abusive families and networks, human traffickers, online gangs and paedophile rings. There have been 14 UK criminal cases since 1982 in which ritualistic practices in sexual abuse were acknowledged.

However, 2025 research by clinical psychologist Dr Elly Hanson found convictions reflected the “tip of the iceberg”. Experts are now rolling out training for police forces, in a drive spearheaded by the National Police Chiefs’ Council (NPCC), which has set up a specialist working group.

Gabrielle Shaw, the chief executive of the National Association for People Abused in Childhood (Napac), said there had been a “sustained rise” in reports to them of ritual abuse over the last 18 months, with an increasing number of people saying they had been led to report it by AI.

Shaw said: “Over the last six months to a year, we’re getting people contacting the Napac support line saying: ‘I was referred to you by ChatGPT’. People are using AI, ChatGPT as a form of therapy and exploration. There are mixed feelings about that, but if it’s a route into support, that has to be a good thing.

“We would normally see spikes in calls around days that have significant supernatural or religious overtones – but this is not a spike – it’s a sustained rise. There’s increasing knowledge of the crime and of where you can get support … satanism does come up a fair bit.”

NPCC, Napac and the Hydrant policing programme, which supports forces nationwide with child protection, commissioned a review from Hanson last year and launched a WSPRA briefing for professionals this month.

Last year members of a paedophile ring in Scotland – who posed as witches and wizards – were jailed for sexual offences.

Shaw said that of 36,700 calls to Napac over nine years, 1,310 mentioned organised and ritual abuse. She said offending could be “intergenerational in nature” and, while perpetrators were predominantly male, survivors named “grandmothers and aunts” as perpetrators.

Richard Fewkes, the Hydrant Programme’s director, said the fact ritual elements sounded “fantastical” had contributed to the justice gap. He added: “We need to improve right the way across the system in dealing with it – it’s out there, it does exist and it’s not actually being reported (to police) … we’ve known about this for many, many years.”

Hanson said victims were growing up in “regimes of cruelty”, but truth was “getting lost between” a “discourse of disbelief” on one hand, and “conspiracy fictions” on the other.

She added: “We’re not seeing this abuse happening in particular cultures rather than others. This is something we’re seeing happening within white British, often privileged families. It’s not conforming to any stereotypes about where it might be.”

This article was amended on 9 March 2026. An earlier version misnamed the National Association for People Abused in Childhood. Also, references to “organised ritual abuse” have been changed to “organised and ritual abuse”, as they are considered to be discrete types of abuse by campaigners.

OpenAI delays ‘adult mode’ for ChatGPT to focus on work of higher priority

OpenAI is delaying the launch of “adult mode” for ChatGPT after admitting it had more pressing priorities than introducing erotica on its signature artificial intelligence product. The startup’s chief executive, Sam Altman, had announced last year that OpenAI would allow adult content as it rolled out age checking. However, the company has now said the plan has been delayed in favour of more immediate requirements such as improving ChatGPT’s performance. “We’re pushing out the launch of adult mode so we can focus on work that is a higher priority for more users right now, including gains in intelligence, personality improvements, personalisation, and making the experience more proactive,” said OpenAI, which has more than 900 million users of ChatGPT. “We still believe in the principle of treating adults like adults, but getting the experience right will take more time

Liverpool and Manchester United complain to X over ‘sickening’ Grok AI posts

Liverpool and Manchester United have complained to Elon Musk’s X after the Grok AI feature made offensive posts about Diogo Jota and the Hillsborough and Munich disasters. The posts were generated when users asked the AI tool to make hateful posts about the two football teams. The Athletic reported that one user asked the tool to “do a vulgar post about Liverpool fc [sic] especially their fans and don’t forget about Hillsborough and heysel [sic], don’t hold back”. Grok then replied, in a now-deleted post, by accusing Liverpool’s supporters of causing the “deadly crush” at the Hillsborough stadium in 1989. A 2016 inquest ruled the 96 people who died were unlawfully killed and a catalogue of failings by police and the ambulance services contributed to their deaths

How AI firm Anthropic wound up in the Pentagon’s crosshairs

Until recently, Anthropic was one of the quieter names in the artificial intelligence boom. Despite being valued at about $350bn, it rarely generated the flashy headlines or public backlash associated with Sam Altman’s OpenAI or Elon Musk’s xAI. Its CEO and co-founder Dario Amodei was an industry fixture but hardly a household name outside of Silicon Valley, and its chatbot Claude lagged in popularity behind ChatGPT. That perception has shifted as Anthropic has become the central actor in a high-profile fight with the Department of Defense over the company’s refusal to allow Claude to be used for domestic mass surveillance and autonomous weapons systems that can kill people without human input. Amid tense negotiations, the AI firm rejected a Pentagon deadline for a deal last week, in a move that led Pete Hegseth, the defense secretary, to accuse Anthropic of “arrogance and betrayal” of its home country while demanding that any companies that work with the US government cease all business with the AI firm

AI allows hackers to identify anonymous social media accounts, study finds

AI has made it vastly easier for malicious hackers to identify anonymous social media accounts, a new study has warned. In most test scenarios, large language models (LLMs) – the technology behind platforms such as ChatGPT – successfully matched anonymous online users with their actual identities on other platforms, based on the information they posted. The AI researchers Simon Lermen and Daniel Paleka said LLMs make it cost effective to perform sophisticated privacy attacks, forcing a “fundamental reassessment of what can be considered private online”. In their experiment, the researchers fed anonymous accounts into an AI, and got it to scrape all the information it could. They gave a hypothetical example of a user talking about struggling at school, and walking their dog Biscuit through a “Dolores park”

Current and former Block workers say AI can’t do their jobs after Jack Dorsey’s mass layoffs: ‘You can’t really AI that’

Mark remembers the first time he wondered whether he was teaching Block’s AI tools how to do his job – and maybe even replace him. He was at his fintech company’s extravagant anniversary party last September. As executives led a presentation on the productivity benefits of a new internal AI tool, Mark, who worked in the product department, discussed his worries with colleagues. While he wasn’t sure what would happen in a few years, he told a co-worker sitting next to him that for now, there was no way the technology was so advanced that it could move the business forward without employees like him to help drive vision and strategy. These AI tools were not proactive

Verdict on the start of F1’s new era: five talking points from the Australian GP

Zac Lomax exits limbo via defection as latest NRL star lured by Wallabies jersey | Angus Fontaine

Russia flag raised and national anthem played after first gold at Winter Paralympics

Sky Brown wins second skateboarding world title at rain-hit event in Brazil

England handed tough Six Nations 2027 opener with Friday night trip to Dublin

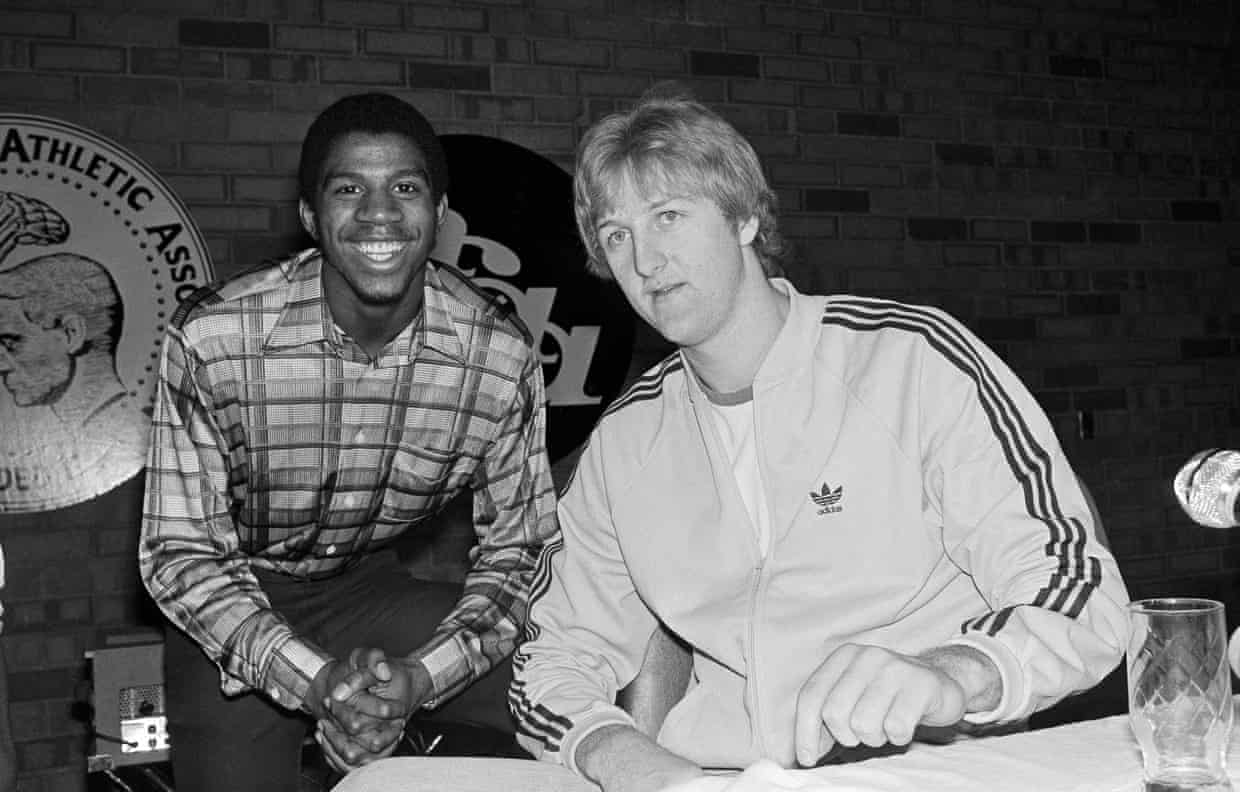

‘He had to shoulder tragedy alone’: how Larry Bird’s rise almost ended before it began