Milka maker milked shoppers over size of chocolate bars, German court rules

Many chocolate lovers consider shrinkflation a serious crime – and they have been vindicated after a German court ruled that the makers of Milka cheated consumers by cutting the bar’s size while keeping the wrapper the same. The three-week case in a regional court was brought by Hamburg’s consumer protection office. It accused the chocolate brand’s US owner Mondelēz of deceiving shoppers by cutting the weight of Milka’s classic Alpine Milk bar from 100g to 90g without significantly altering the distinctive purple packaging. Shrinkflation, where product sizes are reduced but prices stay the same (or even go up), has become all too common as manufacturers try to offset rising business and ingredient costs. After last year’s changes, the Milka bar was a millimetre thinner and the price increased from €1

Global oil inventories falling at record pace amid Iran war; US producer price inflation hits four-year high – as it happened

Global oil stocks are being run down at a record pace as supply losses mount due to the ongoing Iran war, the International Energy Agency has warned. In its latest outlook report, the IEA reports that global oil inventories fell by 129 million barrels in March, and by a further 117 million barrels in April, as countries dipped into their reserves to cover the shortfall following the Middle East conflict. The IEA, which ordered the largest release of government oil reserves in its history in mid-March, reports: “More than ten weeks after the war in the Middle East began, mounting supply losses from the Strait of Hormuz are depleting global oil inventories at a record pace.” The IEA also forecasts weaker demand this year, as the jump in prices for crude oil and refined products leads to demand destruction. World oil demand is forecast to contract by 420,000 barrels per day this year, to 104m bpd, which is 1

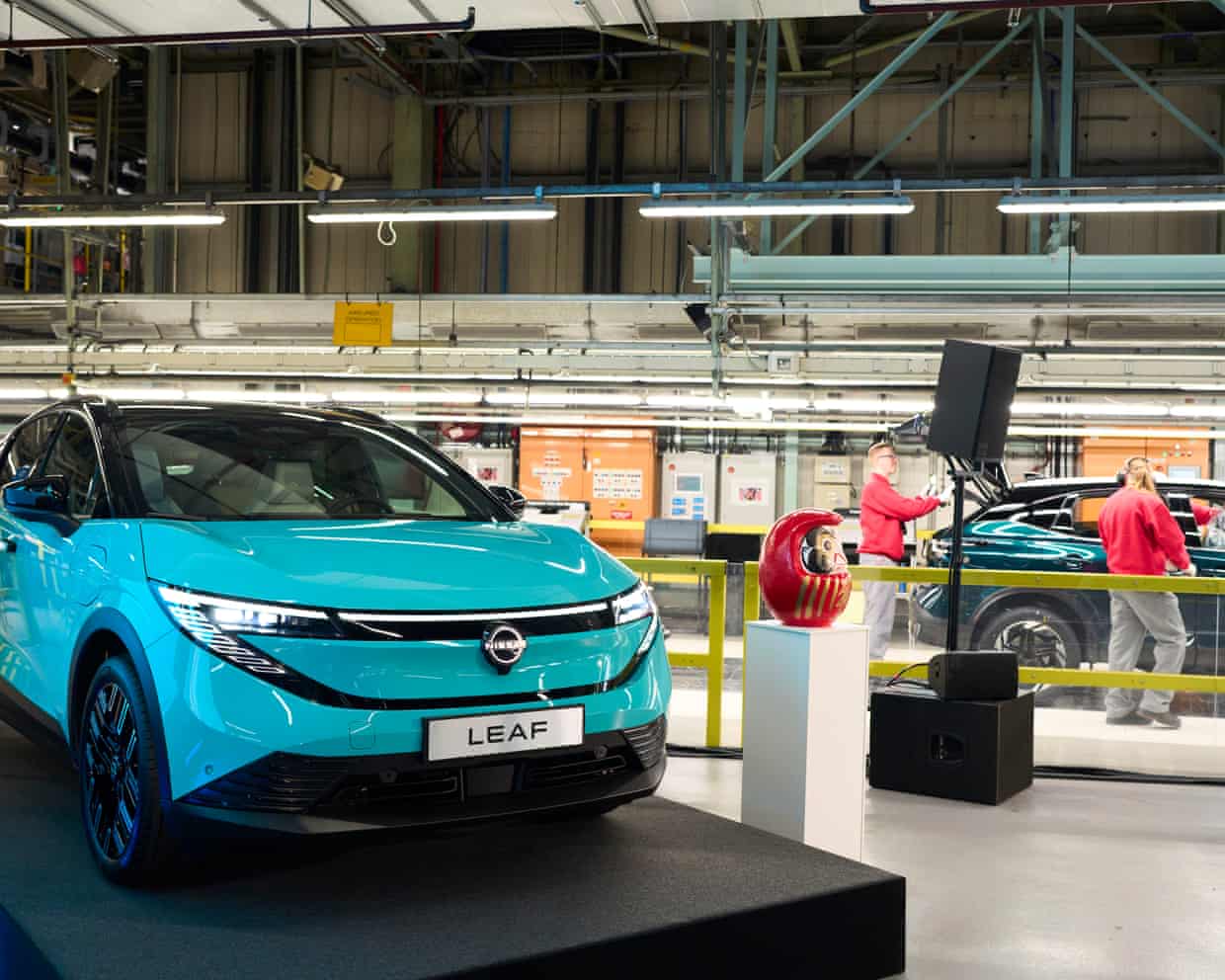

Nissan ponders building cars for Chinese rivals at Sunderland plant

Nissan’s chief executive has confirmed he would consider building cars for other manufacturers at the UK’s largest car factory in Sunderland, amid talks with China’s Chery. Ivan Espinosa said Nissan was “looking at options” for Sunderland and its 6,000 workers as the struggling Japanese carmaker on Wednesday reported steep losses for the year to March. Nissan announced last week it was closing one of its two production lines at Sunderland, in north-east England, because of faltering demand for its vehicles. However, it has held talks to produce vehicles on behalf of Chery, according to industry sources. Chery is pushing aggressively into the UK and Europe with its Chery, Jaecoo and Omoda brands

Lab testing group Intertek to back £10.6bn takeover by Swedish firm EQT

The laboratory testing company Intertek has become the latest FTSE 100 business to agree to a takeover, backing a £10.6bn approach from a private equity firm owned by Sweden’s billionaire Wallenberg family. After rebuffing three previous approaches, Intertek’s board said it was “minded to recommend” the £60-a-share tilt from the Swedish buyout firm EQT to shareholders, if there was a firm offer. The deal is worth £10.6bn including debt, or £9

UK housebuilder Vistry warns of ‘significantly’ lower profits amid Iran war uncertainty

One of the UK’s biggest housebuilders has said its profits will be “significantly” lower, as it was forced to cut prices after heightened uncertainty caused by the US-Israeli war on Iran. Vistry’s shares plunged 10.5% in early trading on Wednesday, hitting their lowest level in nearly 15 years, as it told shareholders its first-half profits would be hit by the fallout from the Middle East conflict. In a stock market update hours before its annual general meeting, the housebuilder, which owns Bovis Homes, Countryside and Linden Homes, said circumstances had changed since it last updated investors in March. It said: “The level of macroeconomic uncertainty has increased, and with it the range of potential outcomes for the current year

How new owner became all powerful in ‘high stakes’ attempt to revive former WH Smith chain

Shoppers at WH Smith were once accustomed to being offered cheap chocolate stacked high at the counter while buying their morning newspaper. Now, the chain’s former high street stores have themselves become the subject of a cut-price deal – as the low-profile investment group that snapped them up appears set to pay less than half of the original cash price. The paperclips-to-books chain had notched up 233 years on the British high street when it was bought by Modella Capital last summer. In less than a year, the future looks very different for the chain, which was hastily rebranded to TG Jones. First established in Little Grosvenor Street in London by Henry Walton Smith and his wife, Anna, WH Smith grew rapidly in the 19th century, building a newspaper distribution business as the railway network expanded

‘We have the same monster’: three women brought down their rapist – this is what happened next

Did breakthrough in US fentanyl crisis start in China?

Getting children to eat their vegetables starts in the womb, researchers suggest

Older people risk mental decline if they do long hours of caring, UK study shows

Capacity of lifts not kept up with UK obesity levels, study shows

More than 6,000 children treated at obesity clinics in England, figures show